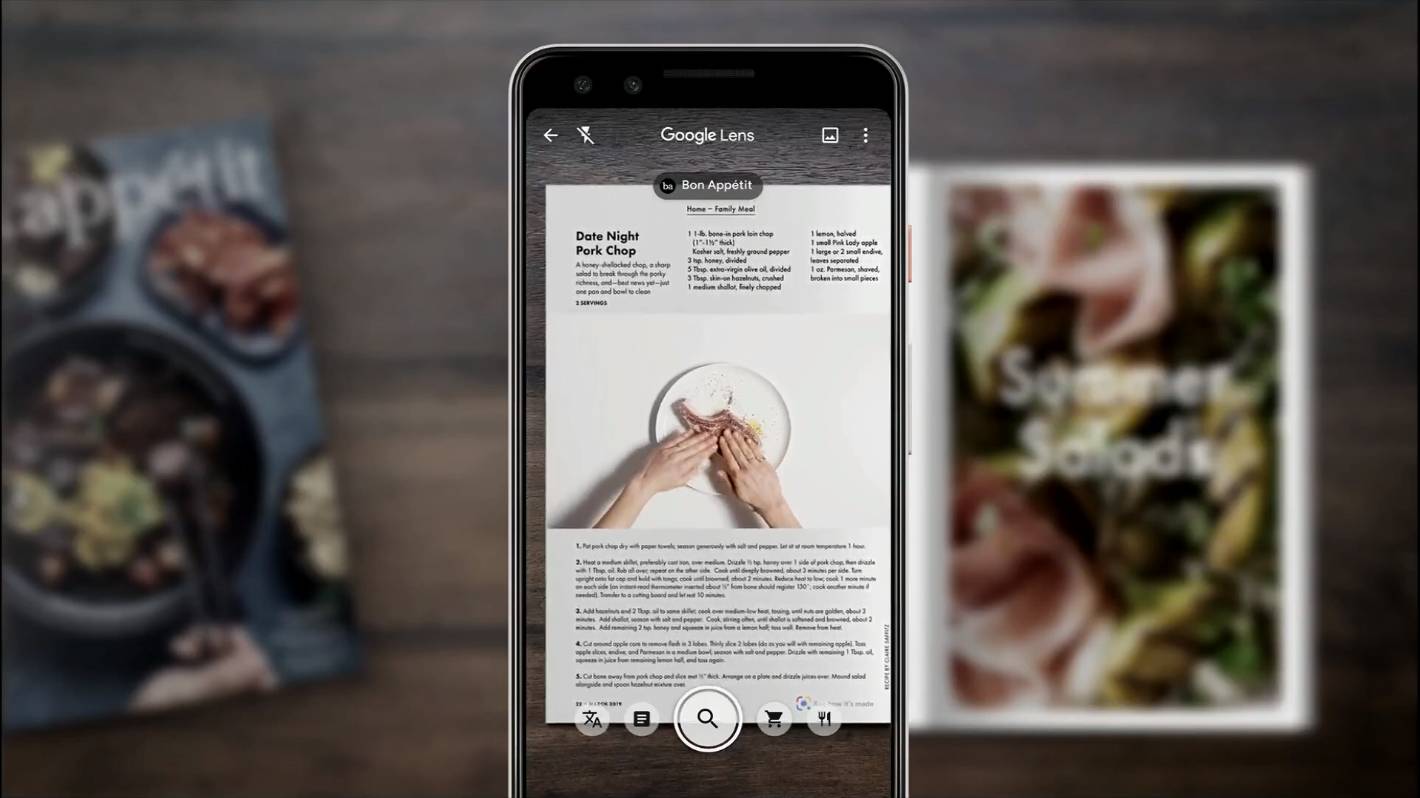

In some sense, the advent of Google Lens reminds me of the early days of computer graphic design. Initially, every design change or image manipulation required a separate programmed routine or application. Later, we built more powerful tools that let us use one tool for multiple functions.

Today, we’re in much the same place with our smartphone cameras: We need dedicated apps. To use each feature, you first have to install an app. Then tap the app, point the phone, and capture the image every time you want to use the camera for that task. An app that extracts business card contact information doesn’t identify wines, recognize dogs, or solve math problems. A music recognition app rejects a photo of a dog as unplayable. (Yes, I tried it.) The software looks for particular patterns to identify and match.

The promise of Google Lens is that we can point-and-talk (or point-and-type). It’s easy to envision a future where you pick up your device, point it at something, say “Hey Google, what is this font?” then see or hear a response. Your request, whether spoken or typed, essentially serves as an app-switcher. Your words help narrow the field of potential results. Is the system supposed to find fonts? Extract a phone number? Identify colors?

Google Lens may have significant implications for developers and companies. Developers of smart camera-reliant apps need to make sure to create value beyond object or element recognition. I anticipate that developers and companies will build apps that integrate image recognition tasks into workflows: pick up your phone, point-and-talk to recognize a product, change a status in a system, and push a notification to a colleague.
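For developers, the idea that a spoken or typed request acts as an app-switcher can be pictured with a toy sketch. This is not how Google Lens works internally; the handler functions, the keyword table, and the `route_request` name are all hypothetical, and a real app would call an actual image recognition service where these stubs return placeholder strings.

```python
from typing import Callable, Dict

# Hypothetical task handlers. Each stub stands in for a real
# recognition routine that would analyze the captured image.
def find_font(image: bytes) -> str:
    return "font lookup"

def extract_phone_number(image: bytes) -> str:
    return "phone number extraction"

def identify_colors(image: bytes) -> str:
    return "color identification"

# Illustrative keyword-to-task table: the words in the request
# narrow the field of potential results to one task.
TASKS: Dict[str, Callable[[bytes], str]] = {
    "font": find_font,
    "phone": extract_phone_number,
    "color": identify_colors,
}

def route_request(request: str, image: bytes) -> str:
    """Pick a task from the request text, the way a user would otherwise pick an app."""
    text = request.lower()
    for keyword, handler in TASKS.items():
        if keyword in text:
            return handler(image)
    # No keyword matched: fall back to generic recognition.
    return "general object recognition"
```

With this sketch, `route_request("Hey Google, what is this font?", photo)` would select the font task without the user opening a dedicated font app first.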
